Background

This is the eighth part of the series on building highly scalable multi-container apps using AKS. So far in the series we have covered the following topics:

- Part 1 : Provision a managed Kubernetes cluster using Azure Container Service (AKS)

- Part 2 : Understand basic Kubernetes objects - Kubernetes Namespace.

- Part 3 : Understand basic Kubernetes objects – Pod and Deployment

- Part 4 : Understand Kubernetes object – Service

- Part 5 : Understand Kubernetes Object – init containers

- Part 6 : Manage Kubernetes Storage using Persistent Volume (PV) and Persistent Volume Claim (PVC)

- Part 7 : Externalize SQL Server container state using Persistent Volume Claim (PVC)

This post is about managing secrets in a Kubernetes cluster. We will focus on the following topics:

- Understand the reasons for using secrets in Kubernetes cluster

- Create secret using Kubernetes manifest

- Register secret in the AKS cluster

- Verify secret in AKS cluster

- Consume secret from the cluster in TechTalks DB deployment while initializing SQL Server 2017 container

- Consume secret from cluster in the TechTalks API init container to initialize the database

- Consume secret from cluster in TechTalks API for database access

Understand the reasons for using secrets in Kubernetes cluster

In enterprise solutions it is quite common to have separation of duties applied to different roles. In production environments developers are not allowed access to sensitive information, and operations teams are responsible for managing the deployments. In such scenarios it is common to distinguish which parts of the application are handled by the development team and which parts are handled by the operations team. The most common example is database passwords.

These are managed by the operations team and in most cases are encrypted before being stored in the target environment. The development team can use these passwords through a pre-configured file path, an environment variable or some other means. The development team does not need to know how the password is generated or what its exact contents are. As long as the application can source the password by some means, it will work fine.

The same approach can be used to externalize the passwords or secrets for different environments like Development / QA / Pre-production / Production etc. Instead of hardcoding the environment-specific settings, we can externalize them using configuration. Let's see how we can use secrets with Kubernetes in our case.

Create secret using Kubernetes manifest

There are different ways in which secrets can be created. As in the earlier parts of this series, we will use a Kubernetes manifest file to store the secret information. First and foremost, let's encode the password that we have been using for the SA account in the TechTalks application. Note that base64 is an encoding and not encryption: anyone who can read the manifest can decode the value.

We need to convert the plaintext password into a base64 encoded string. Run the command shown below to generate the string:

echo -n 'June@2018' | base64

Copy the output of the command. We will store it in the Kubernetes manifest file.

Notice that we set the kind of Kubernetes object as Secret on line 3. In the metadata section we provide the name as sqlsecret. Finally we provide the data. We can provide multiple elements as part of the same secret in the form of key-value pairs. In our case we are specifying only one value, with sapassword as the key. With this setup we are ready to store our secret in the Kubernetes cluster.
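The manifest listing itself is not reproduced in this text, so here is a minimal sketch of what sa-password.yml could look like based on the description above. The namespace aks-part4 is an assumption taken from the kubectl get command used in the verification step, the data value is what base64 encoding the sample password June@2018 produces, and the line positions may not match the original listing.

```yaml
# sa-password.yml (sketch, not the author's original file)
apiVersion: v1
kind: Secret
metadata:
  name: sqlsecret          # name referenced later by secretKeyRef
  namespace: aks-part4     # assumption: namespace used elsewhere in the post
type: Opaque               # generic key-value secret
data:
  # base64 of 'June@2018'; replace with the output of your own command
  sapassword: SnVuZUAyMDE4
```

Values under data must always be base64 encoded. More keys can be added under data if the same secret needs to carry several values.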

Register secret in the AKS cluster

Secrets can be registered in the cluster by running the kubectl create command and specifying the manifest filename. This approach is shown in the Kubernetes Secrets documentation. I use a PowerShell script to deploy the complete application, and all the files in a directory are used as input at the time of deployment. If you wish to deploy just the single manifest file named sa-password.yml, use the command:

kubectl apply -f sa-password.yml

Verify Secret in AKS cluster

Once the secret is deployed to the cluster, we can verify it in different ways. First of all, let's check using the Kubernetes command line.

kubectl get secrets --namespace aks-part4

We can see the sqlsecret created about 2 hours ago (it took me a long time to take the screenshot after creating the secret). Next we can verify the same using the Kubernetes dashboard. Browse to the dashboard and look for Secrets at the bottom of the page.

We can see the same information in the UI as well. Click on the name of the secret and we will get to the details of it as shown below

The information is the same as what we provided in the manifest file. Let's verify the same in the terminal by using the kubectl describe command, for example kubectl describe secret sqlsecret --namespace aks-part4.

The information matches what is shown in the UI except for the Annotations part. Now that we know the secret is available within the Kubernetes cluster, let's turn our focus towards making use of it in the services used by our application.

Consume secret from the cluster in TechTalks DB deployment while initializing SQL Server 2017 container

The first place where the secret is used is when we instantiate the SQL Server 2017 container. This is done as part of the StatefulSet definition.

Pay close attention to line numbers 29 to 32. Instead of hardcoding the password, we now read it from the secret. We reference the secret by its name, sqlsecret, and the value using sapassword as the key. In future, if the password expires and the operations team replaces it, the development team does not need to change the manifest or rebuild the image. Keep in mind that environment variables are resolved when a container starts, so the pod has to be restarted before the new value becomes visible as an environment variable in the container. This solves one problem for us with the creation of the SQL Server 2017 container. How about the services which use this container? In our case, the TechTalks API is the one that depends on the database and interacts with it.
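Since the StatefulSet listing is not included in this text, the fragment below is a rough sketch of how the env section of the SQL Server container might reference the secret. The variable name SA_PASSWORD is an assumption (it is the name the SQL Server 2017 Linux image reads at first start-up and the name interpolated later in the post), and the position of these lines will differ from the line numbers mentioned above.

```yaml
# Fragment of the container spec inside the StatefulSet (sketch)
env:
  - name: ACCEPT_EULA        # required by the SQL Server image
    value: "Y"
  - name: SA_PASSWORD        # assumption: variable name used by the image
    valueFrom:
      secretKeyRef:
        name: sqlsecret      # the Secret object created earlier
        key: sapassword      # the key inside that Secret
```

Kubernetes decodes the base64 value before injecting it, so the container sees the plaintext password in the environment variable.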

Consume secret from cluster in the TechTalks API init container to initialize the database

If you remember the post on init containers, you will recollect that the API pod first initializes the database with master data and a few initial records. Let's use the secret while calling the initialization script.

Notice line numbers 26 to 30. We use exactly the same approach to extract the secret and store it in an environment variable. This environment variable is then interpolated into the command on line 34. With this step we have removed the hardcoded sa password from the initialization script in the init container. We still have the connection string inside the TechTalks API container, which contains the sa password.
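To make the interpolation step concrete, here is a minimal sketch of an init container that reads the secret into SA_PASSWORD and passes it to sqlcmd using the $(VAR) syntax that Kubernetes expands in command and args. The container name, image, service name db-service and script name are hypothetical placeholders, not values from the original manifest.

```yaml
# Fragment of the API Deployment pod spec (sketch)
initContainers:
  - name: init-techtalks-db                               # hypothetical name
    image: example.azurecr.io/techtalks-db-init:latest    # hypothetical image
    env:
      - name: SA_PASSWORD
        valueFrom:
          secretKeyRef:
            name: sqlsecret
            key: sapassword
    command:
      - /opt/mssql-tools/bin/sqlcmd
      - "-S"
      - db-service                  # hypothetical SQL Server service name
      - "-U"
      - sa
      - "-P"
      - "$(SA_PASSWORD)"            # expanded by Kubernetes before the command runs
      - "-i"
      - initialize-database.sql     # hypothetical script name
```

The expansion only works for variables declared in the same container's env section, which is why the secret reference is repeated here rather than shared with the database container.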

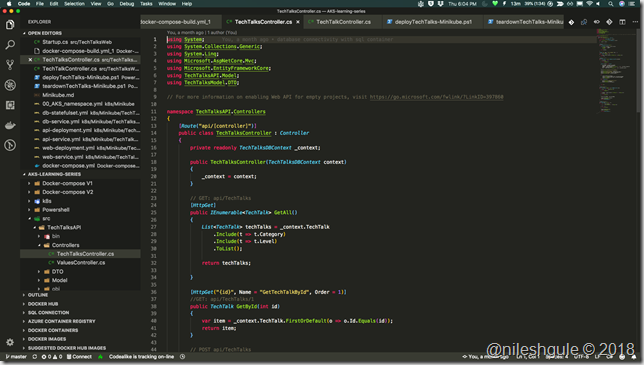

Consume secret from cluster in TechTalks API for database access

Look at the YAML file above from line numbers 42 to 46. We extract the secret and then on line 48 we interpolate it into the connection string using $(SA_PASSWORD). With these modifications in place, we have removed all the hardcoded sa passwords from our code.

I did a quick test by adding a new TechTalk using the application's UI. I can verify that the application is running smoothly.
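As a rough illustration of this pattern, the fragment below defines SA_PASSWORD from the secret and then reuses it inside a second environment variable. The connection string variable name, server name and database name are hypothetical; only the secret name, the key and the $(SA_PASSWORD) reference come from the post.

```yaml
# Fragment of the TechTalks API container spec (sketch)
env:
  - name: SA_PASSWORD
    valueFrom:
      secretKeyRef:
        name: sqlsecret
        key: sapassword
  # Must come after SA_PASSWORD so that $(SA_PASSWORD) can be resolved
  - name: ConnectionStrings__DefaultConnection   # hypothetical setting name
    value: "Data Source=db-service;Initial Catalog=TechTalksDB;User Id=sa;Password=$(SA_PASSWORD);"
```

The order matters: Kubernetes only expands references to variables that were defined earlier in the same env list, otherwise the literal text $(SA_PASSWORD) is passed through unchanged.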

Conclusion

Secrets management is quite a powerful concept in software development. During the course of this post we saw that Kubernetes provides built-in support for managing secrets. By externalizing the secrets, we also make our applications more portable. We do not need to hardcode secrets into the application, and the same code can be deployed to multiple environments by using different configurations.

Another advantage of externalizing secrets is that multiple containers can share the same secret in the cluster. In our case the SQL Server container and the API container share the secret. If we did not share the secret, then the next time the sa password changes we would need to update and redeploy both containers.

Secrets play a very important role in building secure systems. Modern applications built using DevOps practices rely on managing secrets efficiently. Most cloud providers offer secrets management as a dedicated service, like Azure Key Vault. For on-premises scenarios there are products like HashiCorp Vault. I hope by now you realize the importance of secrets and the ease with which we can manage them in a Kubernetes cluster.

This post is dedicated to my friend Baltazar Chua who has been quite persistent in telling me that I should use secrets instead of plaintext passwords for quite a long time now.

As always, the complete source code for the post and the series is available on GitHub.

Until next time, Code with Passion and Strive for Excellence.